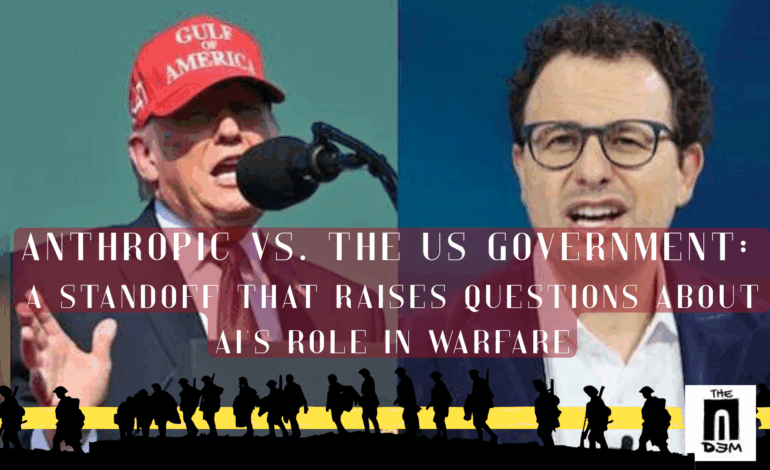

Anthropic vs. the US government: A standoff that raises questions about AI’s role in warfare

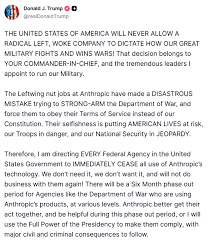

An extraordinary dispute over the role of AI in warfare took place between the AI company Anthropic and officials in Washington in the final week of February. President Donald Trump directed that all federal agencies halt the use of Anthropic’s AI systems. In a post on Truth Social, Trump accused the company of attempting to “strong-arm” the US Department of Defence (which was recently rebranded as the Department of War). “The United States of America will never allow a radical left, woke company to dictate how our great military fights and wins wars,” the president wrote. The order came after the Department of Defence decided that Anthropic posed a national security “supply chain risk.”

Trump’s Post on Anthropic

The dispute raises important questions for both policymakers and technologists. Who has the right to control the use of powerful AI in warfare: the companies that build it or the government that deploys it? It also foregrounds the importance of public oversight and effective social control over an all-powerful technology.

The conflict had been brewing for days. Earlier that week, Defence Secretary Pete Hegseth warned Anthropic’s CEO, Dario Amodei, that the Pentagon would take strict action unless the company granted the military full access to its AI models for “all lawful uses.”

Anthropic’s CEO Dario Amodei

Anthropic’s AI models incorporate a few strict guardrails designed to prevent certain military uses. These guardrails prohibit the use of its systems for domestic mass surveillance and for fully autonomous weapons that operate without human intervention. Defence officials argued that these stipulations interfered with operational requirements. Because the military increasingly relies on AI to process intelligence, identify targets, and aid battlefield decision-making, they contended, Anthropic’s safeguards could hinder the effective integration of AI.

Anthropic, however, refused to concede. It filed two lawsuits against the Pentagon, claiming that the “supply chain risk” designation violates its free speech and due process rights and harms its reputation.

Reasons for Anthropic’s refusal

This standoff was curious because the company was already deeply embedded in the US national security ecosystem.

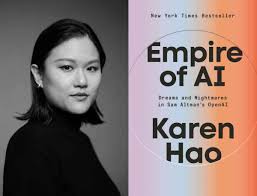

Anthropic’s founders are former OpenAI researchers who left because they couldn’t agree on how to handle AI safety. The company sees itself as a public-benefit corporation that prioritizes safety and reliability. However, like all other major AI firms, it eventually entered the race for market dominance, including in defence. As technology journalist Karen Hao wrote in her book Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI, the company “showed little divergence from OpenAI’s approach, varying only in style but not in substance.”

Karen Hao pictured with book Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI

Anthropic’s Claude models were integrated into intelligence analysis and battlefield planning systems. It partnered with Palantir Technologies and Amazon Web Services to deliver AI capabilities to US intelligence and defence agencies. Last year, the Department of Defence awarded the company a $200 million contract to prototype AI tools for national security. Earlier this year, The Wall Street Journal reported that the US military had used Claude during a covert operation to kidnap Nicolás Maduro from Venezuela—the first publicly known instance of the Pentagon deploying generative AI in a classified mission.

The current dispute was sparked by concerns about a few safeguards that the company considers critical, rather than broad ethical objections. In a statement on discussions with the Department of War, Dario Amodei emphasized that his company supports the use of AI to strengthen US national security. According to him, Claude systems are already being used inside defence agencies for tasks including intelligence analysis, cyber operations, and operational planning.

However, Anthropic drew a red line in two areas: mass domestic surveillance of American citizens and fully autonomous weapons. It believes that these capabilities are beyond the reliability of current AI systems and pose a threat to democratic practices. At the same time, it insisted that these guardrails are not intended to dictate military strategy. Rather, they are necessary precautions to prevent the misuse of increasingly powerful AI systems. That position has put it on a collision course with a government determined to retain full control over technologies it sees as central to the future of warfare.

A rival steps into the void and faces a backlash

As Anthropic exited, rival OpenAI was quick to fill the vacuum. OpenAI CEO Sam Altman announced a deal with the Department of Defence containing the “any lawful use” clause that Anthropic had refused to accept. The clause effectively allows the government to determine how the systems may be deployed. Altman said, however, that OpenAI still maintains internal limits on certain applications, including mass surveillance and fully autonomous weapons.

OpenAI CEO Sam Altman

The move drew considerable backlash from employees and the wider AI community. According to some reports, more than 1.5 million users left ChatGPT within 48 hours of the announcement.

An open letter titled “We Will Not Be Divided,” signed by hundreds of current and former employees of Google and OpenAI, urged AI companies to resist government pressure to deploy their technology for mass surveillance or autonomous weapons. As AI systems become embedded in our daily lives, the choices companies make regarding their use will have profound consequences for democratic freedom. The signatories called on AI firms to take a unified stand against technology that enables mass government surveillance or delegates life-and-death decisions to machines. They warned that without these ethical boundaries, AI risks becoming a powerful and dangerous instrument of social control.

In an internal meeting with employees, Altman acknowledged that the timing of the announcement appeared “opportunistic” and had generated negative publicity. Speaking later at an investor conference, he said decisions about how AI should be used in national defence should be made by elected officials, not technology companies. The democratic process, he argued, should determine the rules for deploying AI, even if that process is imperfect.

Silicon Valley and the Pentagon

The relationship between technology companies and the defence establishment has always been one of cooperation and unease. Many of the technologies that underpin today’s digital economy, from the internet to GPS, originated in US defence-funded research during the Cold War, much of it coordinated through agencies such as the Defence Advanced Research Projects Agency.

As the consumer tech industry expanded in the 1990s and 2000s, however, many companies sought to distance themselves from the military establishment. Firms such as Google and Microsoft built vast commercial empires serving billions of users worldwide. At the same time, parts of their workforce grew increasingly wary of involvement in warfare or surveillance programs. Those tensions came to the surface in 2018 with Project Maven, a US Department of Defence initiative that used AI to analyze drone footage. Thousands of employees protested Google’s participation, and the company ultimately decided not to renew the contract.

Microsoft has long faced criticism from employees and activists for its role in Israel’s siege of Gaza. In September 2025, it revoked access to Azure and AI services for a unit within the Israeli Ministry of Defence, following an internal review that found evidence of their use for mass surveillance.

At the same time, the strategic importance of AI has drawn technology companies back into closer collaboration with the defence establishment. As part of its efforts to maintain a technological advantage over rivals like China, the Pentagon has sought partnerships with leading developers of advanced computing systems. Companies are increasingly collaborating with government and defence agencies on cloud infrastructure, cybersecurity, and AI capabilities. These partnerships provide them with lucrative contracts and the opportunity to shape the future of national security technology infrastructure.

However, long-standing concerns about Silicon Valley’s ethical boundaries in modern warfare persist.

Beyond the safeguards vs. sovereignty debate

Anthropic has drawn a line by refusing to provide the military with a blank check for “all lawful uses.” OpenAI was only too happy to cross it. The debate appears to revolve around the state’s right to assert sovereignty over technology developed by private corporations for military purposes. But there’s more to it.

The fierce backlash from tech workers and the community demonstrates that the industry is once again deeply divided on the ethics of modern combat. As AI firms compete for lucrative national security contracts, a fundamental tension has emerged. Who defines the moral limits of our most powerful technology: the researchers who understand its inner workings, the companies that manufacture it, or the elected officials who want to use it on the battlefield?

J. Robert Oppenheimer and several other scientists who led the development of the atomic bomb during WWII faced a terrible choice between the moral weight of global devastation and groundbreaking scientific achievement. Many scientists and commentators now believe that AI’s rapid advancement has created a new “Oppenheimer moment.” For instance, Geoffrey Hinton, the “Godfather of AI” who pioneered artificial neural networks, the foundational technology of modern AI, left his high-level position at Google to raise the alarm. He warns that the surprising pace of this technology now poses several major threats to humanity: mass unemployment, malicious exploitation, autonomous lethal weapons, and existential risk.

Geoffrey Hinton (“Godfather of AI”)

In a more cautious policy climate, mounting warnings about the risks of advanced AI could have resulted in stronger safeguards. Instead, many of the regulatory efforts are being dismantled. The changes largely benefit powerful government actors and major technology companies.

This tendency is most visible in the US under the administration’s Make America Great Again agenda. The Trump administration has framed AI dominance as critical to US national security and economic power, especially in strategic competition with China. To accomplish this, the White House has moved away from the restrictive regulatory approaches pursued by previous administrations. The government has also launched an ideological campaign against “woke AI,” accusing certain AI models of incorporating left-leaning ideological biases, particularly around diversity, equity, and inclusion. It is not surprising that the president levelled “woke” accusations in his current conflict with Anthropic.

Overall, the US government is attempting to coerce technology companies into closer alignment with its ideological framework. This includes serving authoritarian and imperial interests under the guise of national interest. The standoff between Anthropic and the US government, as well as the events that followed, should be viewed in light of these shifts.

It’s not just about state sovereignty versus corporate interests. It is also about the government’s unwillingness, motivated by self-interest, to take a sane approach to a technology that is a double-edged sword.